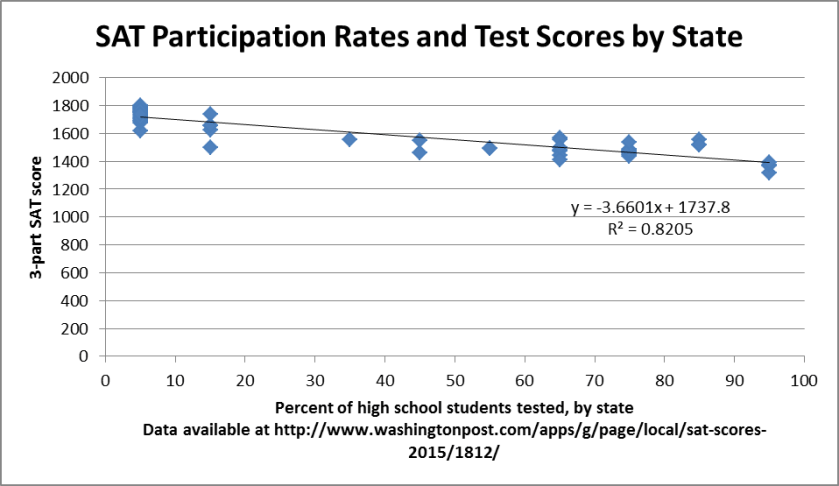

Yesterday, I wrote that some of the decline in SAT scores was likely due to states and districts requiring students to take the SAT. At the request of several esteemed readers, I did a back-of-the-envelope calculation to see how much of the change in SAT scores over the last five years is due to states requiring all students to take the SAT (hat tip to Kan-Ye Test (love the name!) for pointing me to the data). Between 2011 and 2015, Delaware, the District of Columbia, and Idaho moved from having some of their students (14,765) take the SAT to having all of their students (32,236) take it. Meanwhile, the national average SAT score fell from 1500 to 1490.

Using the state-by-state data, I recalculated the 2015 average SAT score while substituting 2011 participation levels and scores for the 2015 figures in those three states. Erasing the additional 17,471 test-takers (and their average SAT of 1292) from those three states was enough to raise the overall average, which is dominated by the 1.6 million test-takers everywhere else, by 2.1 points. Since the average fell by 10 points, these three states explain approximately 21% of the decline in SAT scores, as outlined below.

Required SAT states explain at least 21% of the decline in SAT scores since 2011

| States | Num. students | Avg. SAT |
| --- | ---: | ---: |
| DC, DE, & ID (2011) | 14,765 | 1445 |
| DC, DE, & ID (2015) | 32,236 | 1362 |
| All others (2015) | 1,614,887 | 1493 |
| Total (using ’11 DC, DE, & ID) | 1,629,652 | 1492 |
| Total (using ’15 DC, DE, & ID) | 1,647,123 | 1490 |
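For anyone who wants to check the arithmetic, here is a minimal sketch of the weighted-average calculation behind the table. The counts and scores come straight from the table. The helper `weighted_mean` and the variable names are made up for illustration. Because the table's averages are already rounded, the recomputed totals can differ from the table by a fraction of a point.

```python
def weighted_mean(groups):
    """Average score across groups given as (num_students, avg_score) pairs."""
    total_students = sum(n for n, _ in groups)
    total_points = sum(n * avg for n, avg in groups)
    return total_points / total_students


# Figures copied from the table (averages are rounded to whole points).
required_2011 = (14_765, 1445)       # DC, DE, & ID in 2011
required_2015 = (32_236, 1362)       # DC, DE, & ID in 2015
all_others_2015 = (1_614_887, 1493)  # every other state in 2015

# Actual 2015 average vs. the counterfactual that keeps the three
# states at their 2011 participation levels and scores.
actual_2015 = weighted_mean([required_2015, all_others_2015])
counterfactual_2015 = weighted_mean([required_2011, all_others_2015])

points_explained = counterfactual_2015 - actual_2015
reported_decline = 1500 - 1490  # national average, 2011 to 2015
share_explained = points_explained / reported_decline

# Implied average of the added test-takers, assuming (as a simplification)
# that the original 14,765 students would still average 1445 in 2015.
added_students = required_2015[0] - required_2011[0]
added_avg = (
    required_2015[0] * required_2015[1] - required_2011[0] * required_2011[1]
) / added_students

print(f"Actual 2015 average:         {actual_2015:.1f}")
print(f"Counterfactual 2015 average: {counterfactual_2015:.1f}")
print(f"Points explained:            {points_explained:.1f}")
print(f"Share of decline explained:  {share_explained:.0%}")
print(f"Added test-takers: {added_students:,} averaging {added_avg:.0f}")
```

With the rounded inputs, this gives a gap of about 2.1 points, or roughly 21% of the 10-point decline, and an implied average of about 1292 for the 17,471 added test-takers.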

I’d still love to see the College Board pull out data from the districts which moved to require the SAT, as it’s entirely possible that half of the decline in SAT scores could just be due to students who were required to take the test. They’ve got the data, and I hope they take a look!