On December 3, a House of Representatives subcommittee held a hearing entitled “Keeping College Within Reach: Strengthening Pell Grants for Future Generations.” Here is my take on the hearing:

Author: Robert

Performance Indicators and College Ratings

This has been a busy part of the semester on both the teaching and research sides. But I was able to attend part of the Association for the Study of Higher Education (ASHE) conference, which included a great panel discussion on performance indicators and college ratings. Rather than write a typical blog post, I’m giving Storify a shot. Below is the link to my story:

http://storify.com/rkelchen/performance-indicators-and-college-ratings#

Take a look and let me know what you think!

Let’s Track First-Generation Students’ Outcomes

I’ve recently written about the need to report the outcomes of students based on whether they received a Pell Grant during their first year of college. Given that annual spending on the Pell Grant is about $35 billion, this should be a no-brainer—especially since colleges are already required to collect the data under the Higher Education Opportunity Act. Household income is a strong predictor of educational attainment, so people interested in social mobility should support publishing Pell graduation rates. I’m grateful to get support from Ben Miller of the New America Foundation on this point.

Yet, there has not been a corresponding call to collect information based on parental education, even though there are federal programs targeted to supporting first-generation students. The federal government already collects parental education on the FAFSA, although the choice of “college or beyond” may be unclear. (It would be simple enough to clarify the question if desired.)

My proposal here is simple: track graduation rates by parental education. This can easily be done through the current version of IPEDS, although the usual caveats about IPEDS’s focus on first-time, full-time students still apply. These rates could be another useful data point for students and their families, as well as for policymakers and potentially President Obama’s proposed college ratings. Collecting these data shouldn’t be an enormous burden on institutions, particularly relative to the Title IV funds they receive.
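Mechanically, a disaggregated graduation rate is just a completion share computed within each group. Here is a minimal sketch, assuming student-level records with a hypothetical first-generation label and a completion flag (for example, graduating within 150% of normal time, the window IPEDS uses for its first-time, full-time cohorts). The records and labels below are illustrative, not an IPEDS file layout.

```python
from collections import defaultdict

# Hypothetical student records: (group label, graduated within 150% of normal time).
# Labels and values are illustrative only.
cohort = [
    ("first-generation", True),
    ("first-generation", False),
    ("first-generation", False),
    ("continuing-generation", True),
    ("continuing-generation", True),
    ("continuing-generation", False),
]


def graduation_rates_by_group(records):
    """Return the share of students in each group who graduated."""
    totals = defaultdict(int)
    completers = defaultdict(int)
    for group, graduated in records:
        totals[group] += 1
        completers[group] += int(graduated)
    return {group: completers[group] / totals[group] for group in totals}


rates = graduation_rates_by_group(cohort)
for group, rate in sorted(rates.items()):
    print(f"{group}: {rate:.1%}")
```

Swapping the label for Pell versus non-Pell status would produce the Pell graduation rates discussed above with the same function.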

As we continue working to improve IPEDS by collecting more useful data, parental education should be part of the conversation.

The Value of “Best Value” Lists

I can always tell when a piece about college rankings makes an appearance in the general media. College administrators see the piece and tend to panic while reaching out to their institutional research and/or enrollment management staffs. The question asked is typically the same: why don’t we look better in this set of college rankings? As the methodologist for Washington Monthly magazine’s rankings, I get a flurry of e-mails from these panicked analysts trying to get answers for their leaders—as well as from local journalists asking questions about their hometown institution.

The most recent article to generate a burst of questions to me was on the front page of Monday’s New York Times. It noted the rise in lists that look at colleges’ value to students instead of their overall performance on a broader set of criteria. (A list of the top ten value colleges across numerous criteria can be found here.) While Washington Monthly’s bang-for-the-buck article from 2012 was not the first effort at a value list (Princeton Review has that honor, to the best of my knowledge), we were the first to incorporate a cost-adjusted performance measure that accounts for both student characteristics and the net price of attendance.

When I talk with institutional researchers or journalists, my answer is straightforward. To look better on a bang-for-the-buck list, colleges have to either increase their bang (higher graduation rates and lower default rates, for example) or lower their buck (with a lower net price of attendance). Prioritizing these measures does come with concerns (see Daniel Luzer’s Washington Monthly piece), but the good most likely outweighs the bad.
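The two levers can be seen in a toy ratio. This is a sketch under a loudly stated assumption: `bang_for_buck` is a hypothetical score (outcome points per $10,000 of net price), not the Washington Monthly formula, and the college figures are made up.

```python
def bang_for_buck(bang: float, net_price: float) -> float:
    """Hypothetical value score: outcome points per $10,000 of annual net price.

    Illustrative only; this is not the Washington Monthly methodology.
    """
    if net_price <= 0:
        raise ValueError("net price must be positive")
    return bang / (net_price / 10_000)


# Hypothetical college: a 60-point outcome score at a $15,000 net price.
baseline = bang_for_buck(60.0, 15_000)
# Lever 1: raise the "bang" (e.g., higher graduation or lower default rates).
more_bang = bang_for_buck(66.0, 15_000)
# Lever 2: lower the "buck" (a lower net price of attendance).
less_buck = bang_for_buck(60.0, 12_000)

print(f"baseline={baseline:.1f}, more bang={more_bang:.1f}, less buck={less_buck:.1f}")
```

Either a 10% gain in outcomes or a 20% cut in net price raises this hypothetical score, which is the whole answer I give to institutional researchers.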

Moving forward, it will be interesting to see how these lists continue to develop, and whether they are influenced by the Obama Administration’s proposed college ratings. It’s an interesting time in the world of college rankings, ratings, and guides.

Two and a Half Cheers for Prior Prior Year!

Earlier this week, the National Association of Student Financial Aid Administrators (NASFAA) released a report I wrote with Gigi Jones of NASFAA on the potential to use prior prior year (PPY) income data in determining students’ financial aid awards. Compared to the current policy of using prior year (PY) data, PPY would allow students to file the FAFSA up to a year earlier. (See this previous post for a more detailed summary of PPY.)

Although the use of PPY could advance the timeline for financial aid notification, it could also change some students’ aid packages. For example, if a dependent student’s family had a large decrease in income the year before the student entered college, the financial aid award would be more generous under PY. Other students’ aid packages would be more generous under PPY. Although we might expect the aid increases and decreases from a move to PPY to balance each other out, the existence of professional judgments (in which financial aid officers can adjust students’ aid packages based on unusual circumstances) complicates that analysis. As a result, it’s possible that PPY could increase both program costs and the burden faced by financial aid offices.

To examine the feasibility and potential distributional effects of PPY, we received student-level FAFSA data from nine colleges and universities from the 2007-08 through the 2012-13 academic years. We then estimated the expected family contribution (EFC) for students using PY and PPY data to see how much Pell Grant awards would vary by the year of financial data used. (This exercise also gave me a much greater appreciation for how complicated it truly is to calculate the EFC…and how much data is currently needed in the FAFSA!)

The primary result of the study is that about two-thirds of students would see the exact same Pell award using PPY as they would using PY. These students tend to fall into two groups—students who would never be eligible for the Pell (and are largely filing the FAFSA to be eligible for federal student loans) and those with zero EFC. Students near the Pell eligibility threshold are the bigger concern, as about one in seven students would see a change in their Pell award of at least $1,000 under PPY compared to PY. However, many of these students would never know their PY eligibility, somewhat reducing concerns about the fairness of the change.
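The comparison behind these shares can be sketched as a tally over paired awards. This assumes each student has two already-computed Pell awards, one from PY data and one from PPY data; the pairs below are hypothetical and are not the study’s data, and the EFC calculation that produces the awards is not shown.

```python
# Hypothetical paired Pell awards in dollars: (award using PY data, award using PPY data).
# Illustrative values only; these are not the study's data.
paired_awards = [
    (0, 0),
    (5_550, 5_550),
    (5_550, 5_550),
    (2_000, 3_200),
    (4_100, 2_900),
    (0, 0),
    (1_500, 1_700),
]


def summarize_changes(pairs, threshold=1_000):
    """Share with an identical award, a change of at least `threshold`, and a drop of at least `threshold`."""
    n = len(pairs)
    same = sum(1 for py, ppy in pairs if py == ppy)
    big_change = sum(1 for py, ppy in pairs if abs(ppy - py) >= threshold)
    big_drop = sum(1 for py, ppy in pairs if py - ppy >= threshold)
    return {
        "same_award": same / n,
        "change_at_least_threshold": big_change / n,
        "drop_at_least_threshold": big_drop / n,
    }


summary = summarize_changes(paired_awards)
for label, share in summary.items():
    print(f"{label}: {share:.1%}")
```

Separating drops from all changes matters because only students whose award falls under PPY have a reason to request a professional judgment.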

To me, the benefits of PPY are pretty clear. So why two and a half cheers? I have three reasons to knock half a cheer off my assessment of a program that is still quite promising:

(1) We don’t know much about the burden of PPY on financial aid offices. When I’ve presented earlier versions of this work to financial aid administrators, they generally think that the additional burden of professional judgments (students appealing their aid awards due to extenuating circumstances) won’t be too bad. I hope they’re right, but it is worth a note of caution going forward.

(2) If students request professional judgments and are successful in getting a larger Pell award, program costs will increase. Roughly 5-7% of students would see their Pell fall by $1,000 or more under PPY. If about 2% of the Pell population is successful (200,000 students), program costs could rise by something like $300-$500 million per year. Compared to a $34 billion program budget, that’s noticeable, but not enormous.

(3) A perfectly implemented PPY program would let students know their eligibility for certain types of financial aid a year earlier than current rules, so as early as the spring of a traditional-age student’s junior year of high school. While that is an improvement, it may still not be early enough to sufficiently influence students’ academic and financial preparation for college. Early commitment and college promise programs reach students at earlier ages, and thus have more potential to be successful.
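The cost estimate in point (2) can be checked with back-of-the-envelope arithmetic. Every input below comes from that paragraph; the outputs are simply what those inputs imply, not new estimates.

```python
# Inputs taken from point (2); everything else is derived from them.
successful_appeals = 200_000     # students who win a larger Pell award
success_share = 0.02             # about 2% of the Pell population
cost_range = (300e6, 500e6)      # estimated added cost per year, in dollars
program_budget = 34e9            # annual Pell program budget, in dollars

implied_pell_population = successful_appeals / success_share
implied_per_student = [cost / successful_appeals for cost in cost_range]
budget_share = [cost / program_budget for cost in cost_range]

print(f"Implied Pell population: {implied_pell_population:,.0f}")
print(f"Implied added award per successful appeal: "
      f"${implied_per_student[0]:,.0f}-${implied_per_student[1]:,.0f}")
print(f"Share of program budget: {budget_share[0]:.1%}-{budget_share[1]:.1%}")
```

The figures imply about 10 million Pell recipients, an average added award of $1,500 to $2,500 per successful appeal (consistent with changes of $1,000 or more), and roughly 0.9% to 1.5% of the program budget.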

Even after noting these caveats, I would like to see PPY get a shot at a demonstration program in the next few years. If it can help at least some students at a reasonable cost, let’s give it a try and see if it does induce students to enroll and persist in college.

Free the Pell Graduation Data!

Today is an exciting day in my little corner of academia, as the end of the partial government shutdown means that federal education datasets are once again available for researchers to use. But the most exciting data to come out today is from Bob Morse, rankings guru for U.S. News and World Report. He has collected graduation rates for Pell Grant recipients, long an unknown for the majority of colleges. Despite the nearly $35 billion per year we spend on the Pell program, we have no idea what the national graduation rate is for Pell recipients. (Richard Vedder, an economist of higher education at Ohio University, has mentioned a ballpark estimate of 30%-40% in many public appearances, but he notes that it is just a guess.)

Morse notes in his blog post that colleges have been required to collect and disclose graduation rates for Pell recipients since the 2008 renewal of the Higher Education Act. I’ve heard rumors of this for years, but these data have not yet made their way into IPEDS. I have absolutely no problem with him using the data he collects in the proprietary U.S. News rankings, nor do I object to him holding the data close; after all, U.S. News did spend time and money collecting it.

However, given that the federal government requires that Pell graduation rates be collected, the Department of Education should collect this data and make it freely and publicly available as soon as possible. This would also be a good place for foundations to step in and help collect this data in the meantime, as it is certainly a potential metric for the President’s proposed college ratings.

Update: An earlier version of this post stated that the Pell graduation data are a part of the Common Data Set. Bob Morse tweeted me to note that they are not a part of that set and are collected by U.S. News. My apologies for the initial error! He also agreed that NCES should collect the data, which only underscores the importance of this collection.

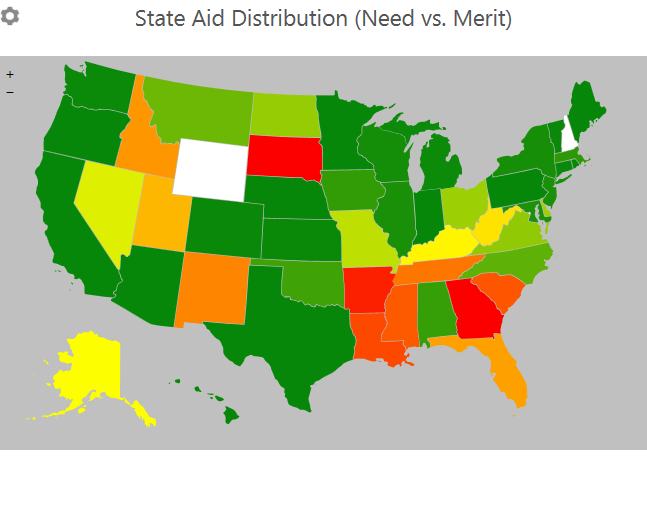

State Need and Merit Aid Spending

I’m fortunate to be teaching a class in higher education finance this semester, as it’s a class that I greatly enjoy and is also intertwined with my research interests. I’m working on slides for a lecture on grant aid (both need-based and merit-based) in the next few weeks, which involves creating graphics about trends in aid. In this post, I’m sharing two of my graphics about state-level financial aid.

States have taken different approaches to financial aid. Some states, particularly in the South, have focused more of their resources on merit-based aid, rewarding students with strong pre-college academic achievement. Other states, such as Wisconsin and New Jersey, have put their resources into need-based aid. Still others have chosen to keep the cost of college low instead of providing aid to students.

The two charts below demonstrate the states’ differences in philosophies. The state-level data come from the National Association of State Student Aid & Grant Programs (NASSGAP) from the 2011-12 academic year. The first chart shows the percentage of funds given to need-based aid (green) and merit-based aid:

Two states currently have no need-based aid (Georgia and South Dakota), and six other states allocate 75% or more of state aid to merit-based programs. On the other hand, nine states have only need-based aid programs, and 16 more allocate 90% or more to need-based aid. Two states (New Hampshire and Wyoming) did not report having student aid programs in 2011-12.

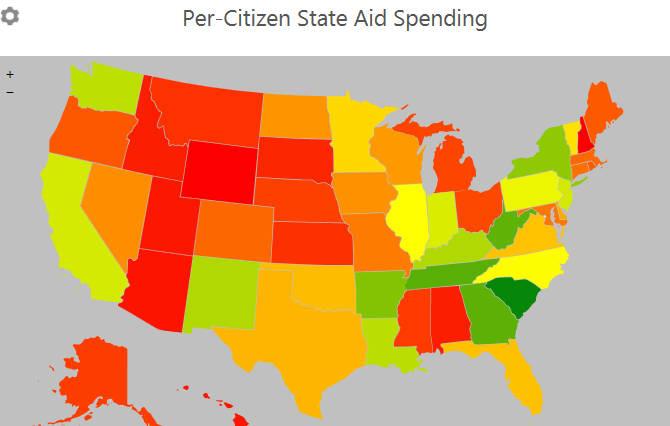

The second chart measures the intensity of state-level spending on student aid. I divide overall spending by the state’s population in 2012, as estimated by the Census Bureau. States with more spending on aid per resident are in green, while lower-spending states are in red:

South Carolina leads the way in state student aid, with nearly $69 per resident; four other Southern states provide $50 or more per resident. The other extreme sees 15 states spending less than $10 per person on aid.
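The per-resident measure is total state aid divided by population. Here is a minimal sketch with hypothetical placeholder states (these are not NASSGAP or Census figures). The green/red cutoff is also an assumption, since the post does not state one; this sketch splits at the median.

```python
import statistics

# Hypothetical placeholder figures: (total state grant aid in dollars, 2012 population).
# These are NOT NASSGAP or Census values.
state_totals = {
    "State A": (69_000_000, 1_000_000),
    "State B": (8_000_000, 1_000_000),
    "State C": (30_000_000, 2_000_000),
    "State D": (100_000_000, 4_000_000),
}

# Aid dollars per resident.
per_resident = {
    state: aid / population for state, (aid, population) in state_totals.items()
}

# Assumed shading rule: above the median is "green", at or below it is "red".
cutoff = statistics.median(per_resident.values())
shading = {
    state: ("green" if value > cutoff else "red")
    for state, value in per_resident.items()
}

for state in sorted(per_resident, key=per_resident.get, reverse=True):
    print(f"{state}: ${per_resident[state]:.2f} per resident ({shading[state]})")
```

Dividing by total population rather than enrollment keeps the measure comparable across states with very different college-going rates, though it measures effort relative to residents rather than to students.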

Notably, states with more of an emphasis on merit aid spend more per resident on aid. The correlation between the percentage of funds allocated to need-based aid and per-resident spending is -0.33, suggesting that merit-based programs (regardless of their effectiveness) are more capable of generating resources for students.
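A correlation like this is a Pearson coefficient computed across states. The sketch below implements it directly; the five states are hypothetical, so the result will not match the -0.33 above. A negative value means states with a larger need-based share tend to spend less per resident, which is descriptive rather than causal.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length (at least 2 values)")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical states: share of aid that is need-based, and aid dollars per resident.
need_share = [1.00, 0.95, 0.60, 0.20, 0.00]
aid_per_resident = [12.0, 30.0, 8.0, 45.0, 20.0]

r = pearson_r(need_share, aid_per_resident)
print(f"r = {r:.2f}")
```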

I’m looking forward to using these graphics (and several others) in my class on grant aid, as the class has been so much fun this semester. I hope my students feel the same way!

What Should Be in the President’s College Ratings?

President Obama’s August announcement that his administration would work to develop a college rating system by 2015 has been the topic of a great deal of discussion in the higher education community. While some prominent voices have spoken out against the ratings system (including my former dissertation advisor at Wisconsin, Sara Goldrick-Rab), the Administration appears to have redoubled its efforts to create a rating system during the next eighteen months. (Of course, that assumes the federal government’s partial shutdown is over by then!)

As the ratings system is being developed, Secretary Duncan and his staff must make a number of important decisions:

(1) Do they push for ratings to be tied to federal financial aid (requiring Congressional authorization), or should they just be made available to the public as one of many information sources?

(2) Should they be designed to highlight the highest-performing colleges, or should they call out the lowest-performing institutions?

(3) Should public, private nonprofit, and for-profit colleges be held to separate standards?

(4) Should community colleges be included in the ratings?

(5) Will outcome measures be adjusted for student characteristics (similar to the value-added models often used in K-12 education)?
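To see why decision (5) matters, here is a minimal sketch of one common form of adjustment: regress graduation rates on a student characteristic and treat each college’s residual as its “value added.” The single predictor (share of Pell recipients) and the four colleges are hypothetical; real value-added models typically use many more characteristics.

```python
def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x (one predictor)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Hypothetical colleges: (share of students receiving Pell, graduation rate).
colleges = {
    "College A": (0.20, 0.80),
    "College B": (0.40, 0.60),
    "College C": (0.60, 0.55),
    "College D": (0.80, 0.30),
}

pell_shares = [pell for pell, _ in colleges.values()]
grad_rates = [grad for _, grad in colleges.values()]
intercept, slope = fit_line(pell_shares, grad_rates)

# "Value added" = actual graduation rate minus the rate predicted from student mix.
value_added = {
    name: grad - (intercept + slope * pell) for name, (pell, grad) in colleges.items()
}
for name, va in sorted(value_added.items(), key=lambda item: item[1], reverse=True):
    print(f"{name}: {va:+.3f}")
```

College C has a lower raw graduation rate than Colleges A and B but the highest adjusted score in this toy example, the kind of reordering that makes the adjustment choice consequential.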

After these decisions have been made, the Department of Education can focus on selecting possible outcomes. Graduation rates and student loan default rates are likely to be a part of the college ratings, but what other measures could be considered, both now and in the future? An expanded version of gainful employment, which currently applies to vocationally oriented programs, is certainly a possibility, as is some measure of earnings. These measures may be subject to additional legal challenges. Some measure of cost may also make its way into the ratings, rewarding colleges that operate more efficiently.

I would like to hear your thoughts (in the comments section below) about whether these ratings are a good idea and what measures should be included. And when the Department of Education starts accepting comments on the ratings, likely sometime in 2014, I encourage you to submit your thoughts directly to them!

Associate’s Degree Recipients are College Graduates

Like most faculty members, I have my fair share of quirks, preferences, and pet peeves. While some of them are fairly minor and come from my training (such as referring to Pell Grant recipients as students from low-income families instead of low-income students, since most students have very little income of their own), others are more important because of the way they incorrectly classify students and fail to recognize their accomplishments.

With that in mind, I’m particularly annoyed by a Demos piece with the headline “Since 1991, Only College Graduates Have Seen Their Income Rise.” This claim comes from Pew data showing that only households headed by someone with a bachelor’s degree or more had a real income gain between 1991 and 2012, while households headed by those with less education lost ground. However, this headline implies that students who graduate with associate’s degrees are not college graduates—a value judgment that comes off as elitist.

According to the Current Population Survey, over 21 million Americans have an associate’s degree, with about 60% of them being academic degrees and the rest classified as occupational. This is nearly half the size of the 43 million Americans whose highest degree is a bachelor’s degree. Many of these students are the first in their families to even attend college, so an associate’s degree represents a significant accomplishment with meaning in the labor market.

Although most people in the higher education world have an abundance of degrees, let’s not forget that our college experiences are becoming the exception rather than the norm. I urge writers to clarify their language and recognize that associate’s degree holders are most certainly college graduates.

Improving Data on PhD Placements

Graduate students love to complain about the lack of accurate placement data for students who graduated from their programs. Programs are occasionally accused of only reporting data for students who successfully received tenure-track jobs, and other programs apparently do not have any information on what happened to their graduates. Not surprisingly, this can frustrate students as they try to make a more informed decision about where to pursue graduate studies.

An article in today’s Chronicle of Higher Education highlights the work of Dean Savage, a sociologist who has tracked the outcomes of CUNY sociology PhD recipients for decades. His work shows a wide range of paths for CUNY PhDs, many of whom have been successful outside tenure-track jobs. Tracking these students over their lifetimes is certainly a time-consuming job, but it should be much easier to determine the initial placements of doctoral degree recipients.

All students who complete doctoral degrees are required to complete the Survey of Earned Doctorates (SED), which is supported by the National Science Foundation and administered by the National Opinion Research Center. The SED contains questions designed to elicit a whole host of useful information, such as where doctoral degree recipients earned their undergraduate degrees (something which I use in the Washington Monthly college rankings as a measure of research productivity) and information about the broad sector in which the degree recipient will be employed.

The utility of the SED could be improved by clearly asking degree recipients where their next job is located, as well as their job title and academic department. The current survey asks about the broad sector of employment, but the most relevant response for postgraduate plans is “have signed contract or made definite commitment to a ‘postdoc’ or other work.” Later questions do ask about the organization where the degree recipient will work, but there is no clear distinction between postdoctoral positions, temporary faculty positions, and tenure-track faculty positions. Additionally, the survey does not ask about the department in which the recipient will work.
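The proposed additions amount to a few extra fields per respondent. Here is a sketch of what such a record could look like. `PlacementResponse`, `PositionType`, and all field names are hypothetical illustrations, not actual SED variables, and the non-faculty category is an assumed catch-all.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class PositionType(Enum):
    """Distinctions the current survey does not clearly draw (non-faculty is an assumed catch-all)."""
    POSTDOC = "postdoctoral position"
    TEMPORARY_FACULTY = "temporary faculty position"
    TENURE_TRACK_FACULTY = "tenure-track faculty position"
    NON_FACULTY = "non-faculty position"


@dataclass(frozen=True)
class PlacementResponse:
    """Hypothetical record for the proposed items; not actual SED variable names."""
    employer: str
    job_location: str
    job_title: str
    department: str
    position_type: PositionType


def placements_by_type(responses):
    """Count responses by position type."""
    return Counter(response.position_type for response in responses)


# Hypothetical responses for illustration.
responses = [
    PlacementResponse("University X", "Chicago, IL", "Postdoctoral Fellow", "Sociology", PositionType.POSTDOC),
    PlacementResponse("College Y", "Albany, NY", "Visiting Assistant Professor", "Sociology", PositionType.TEMPORARY_FACULTY),
    PlacementResponse("University Z", "Austin, TX", "Assistant Professor", "Public Policy", PositionType.TENURE_TRACK_FACULTY),
    PlacementResponse("University X", "Chicago, IL", "Postdoctoral Fellow", "Demography Center", PositionType.POSTDOC),
]

counts = placements_by_type(responses)
for position_type, count in counts.items():
    print(f"{position_type.value}: {count}")
```

An explicit position-type field separates postdocs from visiting and tenure-track faculty directly, instead of leaving analysts to infer it from job titles.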

My proposed changes to the SED are little more than tweaks in the grand scheme of things, but have the potential to provide much better data about where newly minted PhDs take academic or administrative positions. This still wouldn’t fix the lack of data on the substantial numbers of students who do not complete their PhDs, but it’s a start to providing better data at a reasonable cost using an already-existing survey instrument.

Is there anything else we should be asking about the placements of new doctoral recipients? Please let me know in the comments section.